A few years ago, some concerned citizens of TCS, like Sanjeev Arora and Bernard Chazelle, came up with this idea to promote the applications of algorithmic ideas more widely. Chazelle’s essay The Algorithm: Idiom of Modern Science is an example of this (with lots of nice pictures). The name for this world view seems to be “The Algorithmic Lens”.

I like the way that sounds, but it makes me imagine how kids will scorch ants with a magnifying glass. Maybe that is not the best mental imagery to frame interdisciplinary research.

This post is about an application of algorithmic thinking in health metrics. I hope I don’t burn the public health docs with my highly focused beam of algorithms. (That reminds me, if you can’t understand what I’m talking about, and want to, you can leave a comment with questions. I’ll answer. It’s likely that no one else understands what I’m talking about either.)

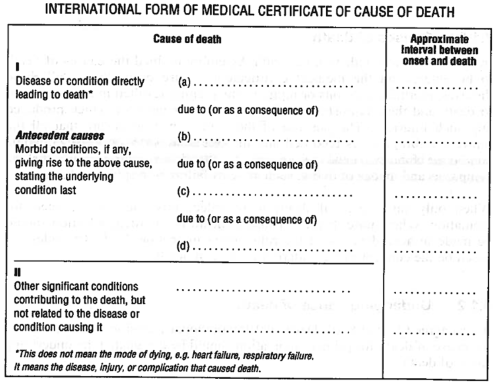

A big question in health metrics, and one that sounds pretty basic, is this: how do people die? (Oh, btw, working in health metrics has been great for my party conversation.) When I say “how do people die?”, I mean what fraction of deaths are due to what causes. To start with an easy case, let’s just talk about how people die in countries like the US and Canada, where there is pretty complete information, stored in a vital registration system. This vital registration system has a record for most everyone who dies, and each death certificate has cause-of-death information that is recorded by a doctor and coded according to the International Statistical Classification of Diseases and Related Health Problems. It might look something like this:

The idea is that a doctor will record the direct cause of death and then intermediate causes of death, going all the way back to some single root cause of death. Filled out, it might look like this:

| Part | Line | Condition | Duration |
| --- | --- | --- | --- |
| I. | (a) | Pulmonary embolism | hours |
| | (b) | Pathological fracture of femur | 2 days |
| | (c) | Secondary malignant neoplasm (femur) | 2 months |
| | (d) | Malignant neoplasm of breast (nipple) | 1 year |
| II. | | Essential hypertension | 5 years |
| | | Obesity | 10 years |

A theorist might think that with this data in hand, calculating how people die reduces to an already solved problem, usually called addition. Unfortunately, it’s not so simple. There are plenty of death certificates that include obviously incorrect cause of death information. For example,

| Part | Line | Condition | Duration |
| --- | --- | --- | --- |
| I. | (a) | Acute myocardial infarction | 1 hour |
| | (b) | Essential hypertension | 1 year |
| | (c) | Diabetes mellitus 2 | 14 years |
| | (d) | Hypothyroidism | 24 hours |

There is a problem here, because the intermediate cause (c) lasted about 5000 times longer than the root cause (d), and a root cause should have been around at least as long as everything it led to. There are other obviously wrong lists, for example where the condition directly leading to death is non-fatal, like obesity. And these are only the forms that are obviously wrong. There are possibly more errors that we can’t identify so easily. Doctors have studied the sources of error in these forms, and, according to Rafael Lozano (a prof here at IHME), “Consensus attribute errors to house staff inexperience, fatigue, time constraints, unfamiliarity with the deceased, and perceived lack of importance of the death certificate.”
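To make the "obviously wrong" test concrete, here is a minimal sketch of the duration check for the certificate above. The function names and the rough unit conversions are my own assumptions for illustration, not part of any real coding standard, and it only handles durations written as a number followed by a unit.

```python
# Rough conversions to hours; these values are assumptions for illustration.
HOURS_PER_UNIT = {"hour": 1, "day": 24, "month": 24 * 30, "year": 24 * 365}


def duration_hours(text):
    """Parse a duration such as '14 years' or '1 hour' into hours."""
    count, unit = text.split()
    return float(count) * HOURS_PER_UNIT[unit.rstrip("s")]


def chain_is_plausible(chain):
    """True if every cause lasted at least as long as the one listed above it.

    `chain` runs from the direct cause (a) down to the root cause.
    """
    hours = [duration_hours(duration) for _, duration in chain]
    return all(upper <= lower for upper, lower in zip(hours, hours[1:]))


suspicious = [
    ("Acute myocardial infarction", "1 hour"),
    ("Essential hypertension", "1 year"),
    ("Diabetes mellitus 2", "14 years"),
    ("Hypothyroidism", "24 hours"),
]

# How much longer line (c) lasted than line (d).
ratio = duration_hours("14 years") / duration_hours("24 hours")

print(chain_is_plausible(suspicious), ratio)
```

A check like this only catches one kind of obvious error. A non-fatal condition listed as the direct cause would need a separate rule.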

We would really like to correct for these errors. How to do it?

The first-pass approach is proportional redistribution. The US and Canada had about 2M obviously wrong records in 2002, which is about 15% of all of them. Proportional redistribution says: assume that the 85% of records that are not obviously wrong have the correct frequencies of deaths by cause, and scale those frequencies up to cover all of the deaths. The correct number of deaths by cause can then be obtained using another classic tool of public policy mathematics, multiplication.
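In code, proportional redistribution is about as short as it sounds. This is a minimal sketch; the function name and the counts in the example are made up for illustration and are not real vital registration data.

```python
def proportional_redistribution(valid_counts, n_invalid):
    """Spread the obviously wrong records over causes in proportion
    to the cause frequencies among the not-obviously-wrong records."""
    total_valid = sum(valid_counts.values())
    scale = (total_valid + n_invalid) / total_valid
    return {cause: count * scale for cause, count in valid_counts.items()}


# Hypothetical counts: 850 usable records and 150 obviously wrong ones (15%).
valid_counts = {"cancer": 500, "heart disease": 300, "injury": 50}
corrected = proportional_redistribution(valid_counts, n_invalid=150)

for cause, count in corrected.items():
    print(f"{cause}: {count:.1f}")
```

Every cause gets multiplied by the same factor, so the cause fractions among the usable records are left exactly as they were.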

But when you show doctors the results of the proportional redistribution data correction, they don’t like them. It produces corrections that experts don’t find believable. And here is where the algorithmic lens can help.

What we’ve been describing so far is a classic problem in error correction. We have a signal, the true cause of death, and it is transmitted through a noisy channel (a medical doctor), and then we receive it. Viewed this way, the doctor is a noise machine. So it’s not surprising that proportional redistribution does a bad job, since it’s designed for a very different kind of noise machine.

When would proportional redistribution be a good idea? If the way the noise machine (doctor) screwed up the data was by flipping a coin and 15% of the time replacing the true data with something chosen uniformly at random.
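Here is a small sketch of why the kind of noise matters. It assumes a simplified noise machine of my own invention, where each death has a chance of turning into an obviously wrong record and that chance may depend on the true cause. It computes what proportional redistribution would report on average. The cause names, counts, and rates are hypothetical.

```python
def expected_estimate(true_counts, garble_rate):
    """Expected result of proportional redistribution when deaths from
    each cause become obviously wrong records at rate garble_rate[cause]."""
    valid = {c: n * (1 - garble_rate[c]) for c, n in true_counts.items()}
    total = sum(true_counts.values())
    total_valid = sum(valid.values())
    return {c: v * total / total_valid for c, v in valid.items()}


true_counts = {"heart disease": 600, "cancer": 400}

# Noise that ignores the true cause: 15% of every cause is garbled.
fair = expected_estimate(true_counts, {"heart disease": 0.15, "cancer": 0.15})

# Still 15% garbled overall (150 of 1000), but all of it from one cause.
skewed = expected_estimate(true_counts, {"heart disease": 0.25, "cancer": 0.0})

print(fair)
print(skewed)
```

In the first case the true counts come back. In the second, the same overall 15% of bad records leaves heart disease undercounted and cancer overcounted, because the doctor-shaped noise machine did not garble all causes equally.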

I think I can cook up a better model of the noise machine. I’d make it look more like edit distance, where the condition list is corrupted by some random perturbations, like reversing the list order, deleting an item, and transposing two items. I hope I’ll have a chance to dig into this more in the future, but with a model like this, I think maximum likelihood estimation is computationally equivalent to solving a pretty large system of linear equations. As an added bonus, for a lot of noise machines I’ve been thinking of, the linear system will be diagonally dominant, which means that I can cash in on some of the fancy algorithms for solving these systems.
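To give a feel for what an edit-distance-style noise machine might look like, here is a rough sketch. The probabilities, the choice to apply the three perturbations independently, and the restriction to swapping adjacent items are all assumptions for illustration. It only generates noise and does not attempt the maximum likelihood estimation.

```python
import random


def noise_machine(conditions, rng, p_reverse=0.05, p_delete=0.05, p_transpose=0.05):
    """Return a possibly corrupted copy of an ordered list of conditions."""
    noisy = list(conditions)
    if rng.random() < p_reverse:
        noisy.reverse()  # list filled out upside down
    if len(noisy) > 1 and rng.random() < p_delete:
        del noisy[rng.randrange(len(noisy))]  # one condition left off
    if len(noisy) > 1 and rng.random() < p_transpose:
        i = rng.randrange(len(noisy) - 1)
        noisy[i], noisy[i + 1] = noisy[i + 1], noisy[i]  # two neighbors swapped
    return noisy


true_chain = [
    "Pulmonary embolism",
    "Pathological fracture of femur",
    "Secondary malignant neoplasm (femur)",
    "Malignant neoplasm of breast (nipple)",
]

rng = random.Random(0)  # seeded so the run is repeatable
certificates = [noise_machine(true_chain, rng) for _ in range(1000)]
n_changed = sum(cert != true_chain for cert in certificates)
print(n_changed, "of", len(certificates), "certificates were corrupted")
```

With a generator like this in hand, one could at least test whether a proposed correction method recovers the true cause fractions from the corrupted lists.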

Dr. Noise Machine, M.D.

By the way, does all of this sound creepy? Here is a letter from the State of Washington to the bereaved, titled Why do death certificates need so much information?

There is a recent paper on the arXiv that uses death certificates from France in 2005 as an example application: Vivian Viallon, Onureena Banerjee, Gregoire Rey, Eric Jougla, and Joel Coste, “An empirical comparative study of approximate methods for binary graphical models; application to the search of associations among causes of death in French death certificates.”
