I just got back from a very fun conference, which was the culmination of some very hard work, all on the Verbal Autopsy (which I’ve mentioned often here in the past).

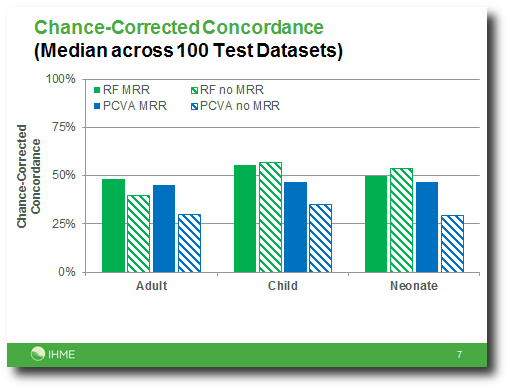

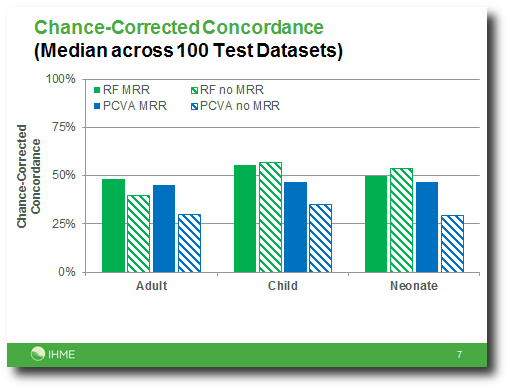

In the end, we managed to produce machine learning methods that rival the ability of physicians. Forget Jeopardy, this is a meaningful victory for computers. Now Verbal Autopsy can scale up without pulling human doctors away from their work.

Oh, and the conference was in Bali, Indonesia. Yay global health!

I do have a machine learning question that has come out of this work, and maybe one of you can help me. The thing that makes VA most different from the machine learning applications I have seen in the past is the large set of values the labels can take. For neonatal deaths, where the set is smallest, we were hoping to predict among 11 different causes, and we ended up thinking that 5 causes may be the most we could do. For adult deaths, we had 55 causes on our initial list. There are two standard approaches that I know of for converting binary classifiers to multiclass classifiers, and I tried both. Random Forest can produce multiclass predictions directly, and I tried this, too. But the biggest single improvement to all of the methods I tried came from a post-processing step that I have not seen in the literature, and I hope someone can tell me what it is called, or at least what it reminds them of.

For any method that produces a score for each cause, what we ended up doing is generating a big table of scores, with one row for each death in a collection of deaths and one column for each cause on our cause list. Then we calculated the rank of each score within its column, i.e. was it the largest score seen for this cause in the dataset, the second largest, and so on. To predict the cause of a particular death, we looked across the row corresponding to that death and found the column with the best rank. This can be interpreted as a non-parametric transformation from scores into probabilities, but saying it that way doesn’t make it any clearer why it is a good idea. It is a good idea, though! I have verified that empirically.
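To make the rank step concrete, here is a minimal sketch in Python with NumPy. It is not the code we actually ran: the function name `rank_based_predictions`, the toy scores, and the tie-breaking rule (ties go to whichever row or column comes first) are all assumptions made for illustration.

```python
import numpy as np

def rank_based_predictions(scores):
    """Hypothetical helper: rank scores within each cause, then pick the best rank per death.

    scores: array of shape (n_deaths, n_causes), higher score = more likely.
    Returns (ranks, predictions), where ranks[i, j] == 1 means death i has
    the largest score seen for cause j.
    """
    scores = np.asarray(scores, dtype=float)
    # argsort of an argsort gives each entry's position in sorted order;
    # negating the scores makes the largest score come first (rank 1).
    order = np.argsort(-scores, axis=0, kind="stable")
    ranks = np.argsort(order, axis=0, kind="stable") + 1
    # Look across each row for the column with the best (smallest) rank.
    # Assumption: ties go to the lowest-numbered cause.
    predictions = ranks.argmin(axis=1)
    return ranks, predictions

# Toy table: 3 deaths (rows) by 2 causes (columns).
# Cause 0 tends to get higher raw scores than cause 1 for every death.
toy_scores = np.array([
    [0.9, 0.3],
    [0.7, 0.1],
    [0.6, 0.5],
])

naive = toy_scores.argmax(axis=1)  # largest raw score in each row
ranks, ranked = rank_based_predictions(toy_scores)

print("raw argmax: ", naive)
print("ranks:\n", ranks)
print("rank-based: ", ranked)
```

In this toy table the raw scores would assign every death to cause 0, because that column runs higher overall. The third death has the largest score seen for cause 1, so the rank-based rule assigns it to cause 1 instead.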

So what have we been doing here?