It’s been three weeks and one IHME retreat since I wrote about matching algorithms and virginity pledges, and I think I now understand what’s going on in Patient Teenagers well enough to describe it. I’ll try to give a stylized example of how the minimum-weight perfect matching algorithm makes itself useful in reproductive health research.

I think it’s helpful to focus on a concrete research question about the virginity pledge and its effects on reproductive health. Here’s one: “does taking the pledge reduce the chances that an individual contracts trichomoniasis?” Whether the answer is yes or no, people can still argue about the value of virginity pledge programs, but the answer seems like relevant information for decision making.

The only way to really answer this question is to run a randomized controlled experiment, which might be possible but is probably neither easy nor cheap. No such experiment has been conducted, and questions like this are still interesting, so people are going to try to answer them with whatever non-random data they have at hand. In Patient Teenagers, Janet took data from the National Longitudinal Study of Adolescent Health, which asked respondents “Have you ever signed a pledge to abstain from sex until marriage?”.

If someone with this data were interested in promoting a culturally conservative political agenda, they wouldn’t need an advanced technique like matching. AddHealth went back to the survey respondents 5 years later, collected urine from all of them, and tested it for trichomoniasis. So the culture warrior could compare prevalence directly between the group that took the pledge and the group that did not. And 2% of the pledgers tested positive, compared to 3% of the non-pledgers. Start writing that press release, because virginity pledges save! Or not… 17% of the pledgers said they were Asian, while only 10% of the non-pledgers did. Follow-up press release idea: virginity pledges make you say that you’re Asian!

This is where matching comes in. The trouble with all of this observational data is “confounding”: there are important factors that could both make people more likely to take virginity pledges and make them less likely to get trich (like being Asian, possibly, or growing up in a culturally conservative household). Matching is the cool way to try to control this confounding. As Aram pointed out in my failed crowd-sourcing experiment, this doesn’t necessarily mean minimum-weight perfect matching, and would probably be less confusingly called “pruning”. The goal is to choose a subset of the students who took the pledge and a subset of the students who didn’t, so that the distributions of the potentially confounding covariates are as similar as possible. Matching/pruning does include minimum-weight perfect matching, as well as minimum-weight k-factors. It seems like greedy matchings, which pair subjects one at a time without minimizing the total weight, are more commonly used, though. This could be due to the code that is currently available to stats hackers, or it could be because minimizing the total weight is not exactly the goal of matching/pruning.
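To make the difference between greedy and minimum-weight matching concrete, here is a minimal Python sketch. The greedy variant shown (each pledger, in order, takes the nearest non-pledger that is still unused) is one common form of greedy matching, assumed here for illustration; the minimum-weight version uses SciPy’s `linear_sum_assignment`, which solves the assignment problem exactly. The four points are made up so that the two methods disagree.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment
from scipy.spatial.distance import cdist


def greedy_match(cost):
    """Greedy matching: each treated unit (row), in order, takes the
    nearest control (column) that has not been used yet."""
    n_treated, n_control = cost.shape
    if n_treated > n_control:
        raise ValueError("need at least as many controls as treated units")
    used = np.zeros(n_control, dtype=bool)
    pairs = []
    for i in range(n_treated):
        # Mask out controls that are already taken.
        d = np.where(used, np.inf, cost[i])
        j = int(np.argmin(d))
        used[j] = True
        pairs.append((i, j))
    return pairs


def optimal_match(cost):
    """Minimum-weight matching: every row gets a distinct column and the
    total cost of the chosen pairs is as small as possible."""
    rows, cols = linear_sum_assignment(cost)
    return list(zip(rows.tolist(), cols.tolist()))


def total_weight(cost, pairs):
    return float(sum(cost[i, j] for i, j in pairs))


# Made-up example: two pledgers and two non-pledgers on the line y = 0.
pledgers = np.array([[1.0, 0.0], [0.0, 0.0]])
non_pledgers = np.array([[0.9, 0.0], [2.5, 0.0]])
cost = cdist(pledgers, non_pledgers)  # Euclidean distances

greedy = greedy_match(cost)    # [(0, 0), (1, 1)]
optimal = optimal_match(cost)  # [(0, 1), (1, 0)]
print(round(total_weight(cost, greedy), 3))   # 2.6
print(round(total_weight(cost, optimal), 3))  # 2.4
```

The first pledger grabs its nearest neighbor, which forces the second pledger into a long pair; the optimal matching accepts a slightly worse first pair to make the total smaller. Whether a smaller total actually gives better covariate balance is the open question raised above.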

I’m going to make two little models of what might be going on here. They will have two confounding factors, call them “parent religiosity index” and “negative expectations about sex index”. Actually, just call them x and y. As you might guess, the higher an individual is on either of these dimensions, the more likely they are to take a virginity pledge. In both models, the probability of an individual getting trich also depends on where they are positioned. But here is where the models differ. In the pledge-is-good model (Model PG), the probability of contracting the STI is lower for pledgers than for non-pledgers. But in Model R (the model corresponding more to reality, according to Janet’s paper), the STI probability is the same for pledgers and non-pledgers at the same (x, y) position. To make things a little clearer, the model world will have trich prevalence about 10 times higher than observed in AddHealth. Sorry, model world!
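Here is a minimal sketch of how two such model worlds could be simulated in Python. The functional forms and coefficients are my own hypothetical choices, not the ones used to draw the pictures in this post: x and y are uniform on [0, 1], the chance of pledging grows linearly with their average, and in Model R the STI risk depends only on position, while in Model PG it also drops for pledgers.

```python
import numpy as np


def simulate(n, model, seed=0):
    """Simulate n subjects under a hypothetical Model PG or Model R.

    Returns positions X (n x 2, columns x and y), pledge flags, STI flags.
    """
    rng = np.random.default_rng(seed)
    X = rng.uniform(0, 1, size=(n, 2))
    s = X.mean(axis=1)  # higher s = higher on both confounding indices
    # In both models, pledging becomes more likely as x and y increase.
    pledge = rng.uniform(size=n) < 0.6 * s
    if model == "R":
        # STI risk depends only on position; the pledge itself does nothing.
        p_sti = 0.55 - 0.5 * s
    elif model == "PG":
        # STI risk depends weakly on position and is lower for pledgers.
        p_sti = 0.35 - 0.1 * s - 0.1 * pledge
    else:
        raise ValueError("model must be 'PG' or 'R'")
    sti = rng.uniform(size=n) < p_sti
    return X, pledge, sti


def crude_prevalence(pledge, sti):
    """Unmatched STI prevalence among pledgers and among non-pledgers."""
    return sti[pledge].mean(), sti[~pledge].mean()


for model in ("PG", "R"):
    X, pledge, sti = simulate(20_000, model)
    p_yes, p_no = crude_prevalence(pledge, sti)
    print(f"Model {model}: pledgers {p_yes:.1%}, non-pledgers {p_no:.1%}")
```

Under this parametrization both models should show a lower crude prevalence among pledgers, which is why the crude comparison alone cannot tell them apart.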

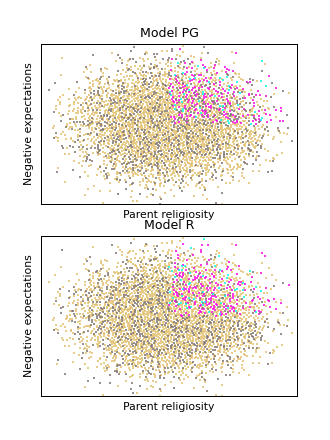

Here’s a picture:

Each point represents a study subject. Purple and aqua have taken pledge, salmon and grey have not; aqua and grey have STI, purple and salmon do not.

Without any matching, both of these models show that pledgers have a prevalence of about 20% and non-pledgers a prevalence of about 30%. But in Model PG the difference is caused by the virginity pledge, while in Model R it is caused by negative expectations and parent religiosity.

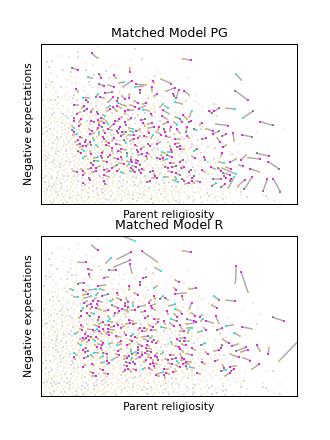

Matching/pruning can tell. Here is a minimum-weight bipartite matching from the pledgers to the non-pledgers:

As above, each point represents a study subject. Purple and aqua have taken pledge, salmon and grey have not; aqua and grey have STI, purple and salmon do not. Pledgers and non-pledgers have been matched to minimize the total euclidean distance of the pairing.

After matching, Model PG maintains a difference in prevalence (as it should), but in Model R the difference has vanished (in fact, it points the other way: 20% of the pledgers have trich, but only 15% of the matched non-pledgers do). I made a big version of the picture, if you want a better look.
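A minimal sketch of the matching step, assuming hypothetical model worlds of my own design (not the parametrization behind the pictures): positions are uniform on the unit square, pledging rises with the average of x and y, and only Model PG gives the pledge itself an effect. Each pledger is matched to a distinct non-pledger by SciPy’s `linear_sum_assignment` on the matrix of Euclidean distances, which minimizes the total distance, and then the matched prevalences are compared. The sketch assumes there are at least as many non-pledgers as pledgers.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment
from scipy.spatial.distance import cdist


def simulate(n, model, rng):
    """Hypothetical Model PG / Model R; returns positions, pledge and STI flags."""
    X = rng.uniform(0, 1, size=(n, 2))      # columns: x, y
    s = X.mean(axis=1)                      # average position on both indices
    pledge = rng.uniform(size=n) < 0.6 * s  # pledging rises with x and y
    if model == "R":
        p_sti = 0.55 - 0.5 * s                  # risk depends on position only
    else:
        p_sti = 0.35 - 0.1 * s - 0.1 * pledge   # the pledge itself lowers risk
    sti = rng.uniform(size=n) < p_sti
    return X, pledge, sti


def matched_prevalence(X, pledge, sti):
    """Match each pledger to a distinct non-pledger so the total Euclidean
    distance is minimal; return (pledger, matched non-pledger) prevalence."""
    treated = np.flatnonzero(pledge)
    control = np.flatnonzero(~pledge)
    if len(treated) > len(control):
        raise ValueError("need at least as many non-pledgers as pledgers")
    cost = cdist(X[treated], X[control])  # Euclidean by default
    rows, cols = linear_sum_assignment(cost)
    return sti[treated[rows]].mean(), sti[control[cols]].mean()


rng = np.random.default_rng(1)
for model in ("PG", "R"):
    X, pledge, sti = simulate(3000, model, rng)
    crude = (sti[pledge].mean(), sti[~pledge].mean())
    matched = matched_prevalence(X, pledge, sti)
    print(f"Model {model}: crude {crude[0]:.1%} vs {crude[1]:.1%}, "
          f"matched {matched[0]:.1%} vs {matched[1]:.1%}")
```

Under these assumptions the matched gap should persist in Model PG and shrink toward zero in Model R, up to sampling noise of a few percentage points at this sample size.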

I’ll follow this up soon with a tutorial on how to actually do matchings in Python (there are many fun and easy ways). But the real challenge is coming up with the weights to minimize (above, I used the Euclidean distance). My initial impression is that this is more of an art than a science, but I hope to learn more about it soon, and when I do, I’ll report back.
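As one illustration of why the choice of weights matters, here is a small Python sketch comparing plain Euclidean distance with a variance-standardized Euclidean distance (SciPy’s `seuclidean` metric). The covariate scales and variances are hypothetical: religiosity on a 0-10 scale, expectations on a 0-1 scale. With raw distances, a 1-point religiosity gap counts for more than a 0.3-point expectations gap, even though, relative to each covariate’s assumed spread, the expectations gap is three times larger.

```python
import numpy as np
from scipy.spatial.distance import cdist

# Hypothetical covariates: column 0 = parent religiosity on a 0-10 scale,
# column 1 = negative-expectations index on a 0-1 scale.
treated = np.array([[5.0, 0.2]])
control = np.array([[6.0, 0.2],   # 1.0 apart on religiosity only
                    [5.0, 0.5]])  # 0.3 apart on expectations only

# Assumed variances of each covariate (standard deviations 2.0 and 0.2).
variances = np.array([4.0, 0.04])

raw = cdist(treated, control)  # plain Euclidean distance
scaled = cdist(treated, control, metric="seuclidean", V=variances)

# The nearest non-pledger changes with the choice of weights.
print(raw.argmin(), scaled.argmin())  # 1 0
```

In practice the variances would be estimated from the data (for example `np.var(pooled, axis=0)` on the pooled covariates), and the Mahalanobis distance (`metric="mahalanobis"` with `VI` set to the inverse covariance matrix) additionally accounts for correlation between covariates. Either matrix can be passed to an assignment solver such as `scipy.optimize.linear_sum_assignment`.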

I gather that you artificially constructed models PG and R to have the desired properties (they’re not real data)? Also, it took me a while to understand how to interpret the matchings: it’s not that anything visually obvious leaps out, but that the matching pairs up a pledge and a non-pledge point that are as similar as possible in the confounding factors, and then you compare the STI probabilities for the whole pledge set and the matched non-pledge set. Presumably one could also make such comparisons within regions of the confounding space, to try to discover, say, whether pledges are useful for teenagers with few negative expectations but make no difference for those with strongly negative expectations.

Hi Greg,

Yeah, exactly as you said, these are artificially constructed examples. In Model PG, taking the virginity pledge causes an individual’s chance of disease to go down. In Model R, being in the upper-right quadrant causes an individual’s chance to go down, and makes them more likely to take the pledge.

I haven’t heard of people using matching to dissect things in this way, but it sounds totally reasonable, if there is enough data. (I increased the disease prevalence by 10x because it was hard to tell what was going on, even in simulations, at the more realistic 2-3% rates.)