Cool paper, cool idea, ICYMI:


From: Mabry, Patricia L

Sent: Thursday, January 14, 2016 5:51 AM

Subject: [iuni_systems_sci-l] Article of interest: reusable holdout method

Dwork, C., Feldman, V., Hardt, M., Pitassi, T., Reingold, O., & Roth, A. (2015). The reusable holdout: Preserving validity in adaptive data analysis. Science, 349(6248), 636-638.

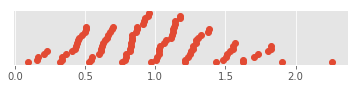

Misapplication of statistical data analysis is a common cause of spurious discoveries in scientific research. Existing approaches to ensuring the validity of inferences drawn from data assume a fixed procedure to be performed, selected before the data are examined. In common practice, however, data analysis is an intrinsically adaptive process, with new analyses generated on the basis of data exploration, as well as the results of previous analyses on the same data. We demonstrate a new approach for addressing the challenges of adaptivity based on insights from privacy-preserving data analysis. As an application, we show how to safely reuse a holdout data set many times to validate the results of adaptively chosen analyses.
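For anyone who wants a feel for the idea before reading the paper, here is a rough sketch in the spirit of the paper's Thresholdout mechanism. It is a simplification, not the authors' exact algorithm: it leaves out the limit on how many queries may touch the holdout and the re-randomized threshold. The function name, parameter names, and default values are my own illustrative assumptions. The analyst never sees the holdout directly. Each query gets the training-set answer unless it disagrees with the holdout by more than a noisy threshold, in which case a noise-perturbed holdout answer is returned.

```python
import numpy as np

def thresholdout(train_vals, holdout_vals, threshold=0.04, sigma=0.01, rng=None):
    """Answer one query from per-sample query values on each set.

    Simplified sketch: no holdout-access budget, no threshold resampling.
    threshold and sigma defaults are illustrative assumptions.
    """
    rng = np.random.default_rng() if rng is None else rng
    train_mean = float(np.mean(train_vals))
    holdout_mean = float(np.mean(holdout_vals))

    # Compare the gap against a noisy threshold so the analyst cannot
    # learn exactly where the cutoff sits.
    eta = rng.laplace(scale=4 * sigma)
    if abs(holdout_mean - train_mean) > threshold + eta:
        # Training estimate looks overfit: release a noisy holdout estimate.
        return holdout_mean + rng.laplace(scale=sigma)

    # Estimates agree: the training value reveals nothing new about the holdout.
    return train_mean
```

Usage would be to call `thresholdout` once per candidate feature or model score, passing the per-sample values of that score on the training and holdout sets, and to treat the returned number as the validated estimate.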

http://science.sciencemag.org/content/349/6248/636.full-text.pdf+html