I’ve got a fun little project that has let me check in on the PyMC project after a long time away. Long-time readers of Healthy Algorithms might remember my obsession with PyMC2 from my DisMod days nearly ten years ago, but for those of you joining us more recently… there is a great way to build Bayesian statistical models with Python, and it is the PyMC package. It was completely rewritten for version 3, which is now available, and using it looks like this:

import numpy as np

import matplotlib.pyplot as plt

# Initialize random number generator

np.random.seed(123)

# True parameter values

alpha, sigma = 1, 1

beta = [1, 2.5]

# Size of dataset

size = 100

# Predictor variable

X1 = np.random.randn(size)

X2 = np.random.randn(size) * 0.2

# Simulate outcome variable

Y = alpha + beta[0]*X1 + beta[1]*X2 + np.random.randn(size)*sigma

from pymc3 import Model, Normal, HalfNormal

basic_model = Model()

with basic_model:

# Priors for unknown model parameters

alpha = Normal('alpha', mu=0, sd=10)

beta = Normal('beta', mu=0, sd=10, shape=2)

sigma = HalfNormal('sigma', sd=1)

# Expected value of outcome

mu = alpha + beta[0]*X1 + beta[1]*X2

# Likelihood (sampling distribution) of observations

Y_obs = Normal('Y_obs', mu=mu, sd=sigma, observed=Y)

basic_model.logp({'alpha':1,

'beta':[1,1],

'sigma_log_':0})

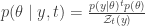

What I need is a way to compute the “power-posterior” of a model, which is to say  .

.

I’ve figured out a pretty cool way to compute the prior and the likelihood of the model:

from theano import theano, tensor as tt

def logpriort(self):

"""Theano scalar of log-prior of the model"""

factors = [var.logpt for var in self.free_RVs]

return tt.add(*map(tt.sum, factors))

def logprior(self):

"""Compiled log probability density function"""

return self.model.fn(logpriort(self))

def logliket(self):

"""Theano scalar of log-prior of the model"""

factors = [var.logpt for var in self.observed_RVs] + self.potentials

return tt.add(*map(tt.sum, factors))

def loglike(self):

"""Compiled log probability density function"""

return self.model.fn(logliket(self))

Now I just need to put them together, to get the log of the power-posterior. There must be an easy way to do it, but this is not it:

def logpowerpt(self, t):

return t*loglike(self) + logprior(self)

def logpowerp(self, t):

return self.model.fn(logpowerpt(self, t))

vals = {'alpha':1,

'beta':[1,1],

'sigma_log_':0}

logpowerp(basic_model, 0)(vals)

Any suggestions?