If I was still tweeting, I’d tweet this to you: a nice post about David Blackwell and his math on Higher Cohomology is Inevitable.

That Bayes Factor

A year or more ago, when I was trying to learn about model selection, I started writing a tutorial about doing it with PyMC. It ended up being more difficult than I expected though, and I left it for later. And later has become later and later. Yet people continue landing on this page and I’m sure that they are leaving disappointed because of its unfinishedness. Sad. But fortunately another tutorial exists, Estimating Bayes factors using PyMC by PyMC developer Chris Fonnesbeck. So try that for now, and I’ll work on mine more later (and later and later).

There is also an extensive discussion on the PyMC mailing list that you can turn to here. It produced this monte carlo calculation.

Filed under MCMC, statistics

Ancient Probability

I had a need to look in the first-ever probability textbook this weekend. It isn’t really ancient, but it is definitely old. And it’s all on the Internet Archive as a pdf. Good times.

Abraham de Moivre, The doctrine of chances: or, A method of calculating the probabilities of events in play (1756)

Filed under education

Random Forest Verbal Autopsy Debut

I just got back from a very fun conference, which was the culmination of some very hard work, all on the Verbal Autopsy (which I’ve mentioned often here in the past).

In the end, we managed to produce machine learning methods that rival the ability of physicians. Forget Jeopardy, this is a meaningful victory for computers. Now Verbal Autopsy can scale up without pulling human doctors away from their work.

Oh, and the conference was in Bali, Indonesia. Yay global health!

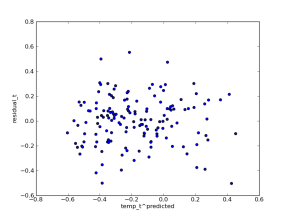

I do have a Machine Learning question that has come out of this work, maybe one of you can help me. The thing that makes VA most different from the machine learning applications I have seen in the past is the large set of values the labels can take. For neonatal deaths, for which the set is smallest, we were hoping to make predictions out of 11 different causes, and we ended up thinking that maybe 5 causes is the most we could do. For adult deaths, we had 55 causes on our initial list. There are two standard approaches that I know for converting binary classifiers to multiclass classifiers, and I tried both. Random Forest can produce multiclass predictions directly, and I tried this, too. But the biggest single improvement to all of the methods I tried came from a post-processing step that I have not seen in the literature, and I hope someone can tell me what it is called, or at least what it reminds them of.

For any method that produces a score for each cause, what we ended up doing is generating a big table with scores for a collection of deaths (one row for each death) for all the causes on our cause list (one column for each cause). Then we calculated the rank of the scores down each column, i.e. was it the largest score seen for this cause in the dataset, second largest, etc., and then to predict the cause of a particular death, we looked across the row corresponding to that death and found the column with the best rank. This can be interpreted as a non-parametric transformation from scores into probabilities, but saying it that way doesn’t make it any clearer why it is a good idea. It is a good idea, though! I have verified that empirically.

So what have we been doing here?

Filed under TCS

Introducing the H-RAM

I haven’t had time to say much recently, due to some travel for work, but I did have a chance to prototype the Hit-and-Run/Adaptive Metropolis approach to MCMC that I mentioned in my last post. Was that really three weeks ago? How time flies.

Anyway, thanks to the tip Aram pointed out in the comments, Hit-and-Run can take steps in the correct direction without explicitly computing an approximation of the covariance matrix, just by taking a randomly weighted sum of random points. It’s nearly magic, although Aram says that it actually makes plenty of sense. The code for this “H-RAM” looks pretty similar to my original Hit-and-Run step method, and it’s short enough that I’ll just show it to you:

class HRAM(Gibbs):

def __init__(self, stochastic, proposal_sd=None, verbose=None):

Metropolis.__init__(self, stochastic, proposal_sd=proposal_sd,

verbose=verbose, tally=False)

self.proposal_tau = self.proposal_sd**-2.

self.n = 0

self.N = 11

self.value = rnormal(self.stochastic.value, self.proposal_tau, size=tuple([self.N] + list(self.stochastic.value.shape)))

def step(self):

x0 = self.value[self.n]

u = rnormal(zeros(self.N), 1.)

dx = dot(u, self.value)

self.stochastic.value = x0

logp = [self.logp_plus_loglike]

x_prime = [x0]

for direction in [-1, 1]:

for i in xrange(25):

delta = direction*exp(.1*i)*dx

try:

self.stochastic.value = x0 + delta

logp.append(self.logp_plus_loglike)

x_prime.append(x0 + delta)

except ZeroProbability:

self.stochastic.value = x0

i = rcategorical(exp(array(logp) - flib.logsum(logp)))

self.value[self.n] = x_prime[i]

self.stochastic.value = x_prime[i]

if i == 0:

self.rejected += 1

else:

self.accepted += 1

self.n += 1

if self.n == self.N:

self.n = 0

Compare the results to the plain old Hit-and-Run step method:

Filed under MCMC

MCMC in Python: PyMC Step Methods and their pitfalls

There has been some interesting traffic on the PyMC mailing list lately. It seems that there is a common trouble with the “Adaptive Metropolis” step method, and it’s failure to converge. I’ve been quite impressed with this approach, and I haven’t had the problems that others reported, so I started wondering: Have I been lucky? Have I been not looking carefully?

I decided to do some experiments to make Metropolis and Adaptive Metropolis shine, and since I’m an aspiring math filmmaker these days, I made my experiments into movies.

I consider the Metropolis step method the essence of MCMC. You have a particular point in parameter space, and you tentatively perturb it to a new random point, and then decide to accept or reject this new point with a carefully designed probability, so that the stationary distribution of the Markov chain is something you’re interested in. It’s magic, but it’s good, simple magic.

Here is the simplest example I could come up with, sampling from a uniform distribution on the unit square in the plane using Metropolis steps. Any self-respecting step method can sample from the unit square in two dimensions!

Reading Time

I’m supposed to be writing a lot right now. Papers, grants, documentation, there’s lots to write (blogs don’t count). But I’ve been a little bit blocked, so I’ve been reading instead. Since I spend so much time reading things where the idea is paramount and the prose is barely functional, I thought I’d mix it up and read some things that are well written just because they are well written. Maybe I’ll get inspiration from authors who put words together well.

On that note, I just finished up Slumberland, the latest novel by Paul Beatty. I like the idea that great writing is happening currently, and not just something in the “classics” section of the library.

Beatty writes epics about Black superheros, so there was no reason for me to expect math to make an appearance in his latest story. Its about an American deejay who moves to pre-unification Berlin with the perfect beat. That makes the math content here the exact opposite of what I complained about after I saw Salt last summer, where screenwriters made Angelina Jolie math-phobic for no reason.

It was quite a pleasant surprise when early on in the book, following some laugh-out-loud funny dialogue that I won’t even hint at here, the surprising results of a math test come out:

The scores were posted outside the classroom in descending order. It was the first computer printout I’d ever seen. There was something affirming about seeing my name and score—FERGUSON W. SOWELL: 100/100—at the top of the list in what was then a futuristic telex font. I felt official. I was real.

Thank you, Paul Beatty, for making your superhero a math whiz on the side.

Comments Off on Reading Time

Filed under education

Piggies and Mazes

All-time-most-popular post on healthyalgorithms: pictures of teacup pigs I copied from a long forgotten newspaper article

New hobby: making mazes with MCMC

2010 in review

The stats helper monkeys at WordPress.com mulled over how this blog did in 2010, and here’s a high level summary of its overall blog health:

The Blog-Health-o-Meter™ reads Wow.

Crunchy numbers

About 3 million people visit the Taj Mahal every year. This blog was viewed about 32,000 times in 2010. If it were the Taj Mahal, it would take about 4 days for that many people to see it.

In 2010, there were 52 new posts, growing the total archive of this blog to 116 posts. There were 39 pictures uploaded, taking up a total of 5mb. That’s about 3 pictures per month.

The busiest day of the year was August 27th with 430 views. The most popular post that day was MCMC in Python: Global Temperature Reconstruction with PyMC.

Where did they come from?

The top referring sites in 2010 were code.google.com, blog.computationalcomplexity.org, reddit.com, Google Reader, and math.cmu.edu.

Some visitors came searching, mostly for teacup pigs, tea cup pigs, teacup pig, math art, and pymc.

Attractions in 2010

These are the posts and pages that got the most views in 2010.

MCMC in Python: Global Temperature Reconstruction with PyMC August 2010

4 comments

Paper rejected, Cheer Up with Baby Animals November 2009

3 comments

MCMC in Python: PyMC for Bayesian Probability November 2008

5 comments

MCMC in Python: PyMC for Bayesian Model Selection August 2009

25 comments

Multilevel (hierarchical) modeling: what it can and cannot do in Python December 2009

16 comments

Filed under Uncategorized

MCMC in Python: Custom StepMethods and bounded-depth spanning tree distraction

I was looking for a distraction earlier this week, which led me to the world of stackexchange sites. The stack overflow has been on my radar for a while now, because web-search for coding questions often leads there and the answers are often good. And I knew that math overflow, tcs overflow, and even stats overflow existed, but I’d never really explored these things.

Well, diversion found! I got enamored with an MCMC question on the tcs site, about how to find random bounded-depth spanning trees. Bounded-depth spanning trees are something that I worked on with David Wilson and Riccardo Zechinna in my waning days of my MSR post-doc, and we came up with some nice results, but the theoretical ones are just theory, and the practical ones are based on message passing algorithms that still seem magical to me, even after hours of patient explanation from my collaborators.

So let’s do it in PyMC… this amounts to an exercise in writing custom step methods, something that’s been on my mind for a while anyways. And, as a bonus, I got to make an animation of the chain in action which I find incredibly soothing to watch on repeat: