I’m preparing for my first international trip for global health, but the net is paying attention to a paper that I think I’ll like, and I want to mention it briefly before I fly.

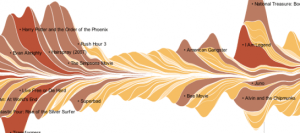

Computational Complexity and Information Asymmetry in Financial Products is 27 pages of serious TCS, but it is so obviously applicable that people outside of our particular ivory tower, and even outside of academia entirely, are blogging and twittering about it, and even reading it!

Freedom to Tinker has a nice summary of this paper, if you want to know what it’s about in a hurry.

Mike Trick makes the salient observation that NP-hard doesn’t mean computers can’t do it. But the assumption that this paper is based on is not about worst-case complexity; it is, as it should be, based on an assumption about the average-case complexity of a particular optimization problem over a particular distribution.

As it turns out, this is an average-case combinatorial optimization problem that I know and love: the densest subgraph problem. My plan is to repeat the problem here, and share some Python code for generating instances of it. Then you, me, and everyone can have a handy instance to try optimizing. I think that this problem is pretty hard, on average, but there is a lot more chance of making progress on an algorithm for it than for cracking the P versus NP nut.
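To make the idea of "an instance" concrete, here is a minimal sketch of one common way to set up an average-case densest subgraph instance: take a sparse random graph and hide a denser random subgraph on k of its vertices. This is an assumption on my part and not the exact distribution from the paper; the function names `planted_dense_subgraph` and `edges_inside` and all of the parameter values are hypothetical, chosen only for illustration.

```python
import itertools
import random


def planted_dense_subgraph(n, p, k, q, seed=None):
    """Return (edges, planted) for a random graph with a hidden dense part.

    Assumed model (not taken from the paper): each pair of vertices is
    joined with probability p, except pairs that both lie in a random
    k-vertex set, which are joined with probability q > p.
    """
    rng = random.Random(seed)
    planted = set(rng.sample(range(n), k))
    edges = set()
    for u, v in itertools.combinations(range(n), 2):
        prob = q if (u in planted and v in planted) else p
        if rng.random() < prob:
            edges.add((u, v))  # stored with u < v
    return edges, planted


def edges_inside(edges, subset):
    """Count the edges with both endpoints in subset (the objective)."""
    subset = set(subset)
    return sum(1 for u, v in edges if u in subset and v in subset)


# Illustrative parameters only: 200 vertices, background density 0.05,
# and a hidden 20-vertex subgraph with density 0.5.
edges, planted = planted_dense_subgraph(n=200, p=0.05, k=20, q=0.5, seed=0)
print(len(edges), edges_inside(edges, planted))
```

The optimization problem is then to find a set of k vertices with as many edges inside it as possible, given only `edges`. Keeping `planted` around lets you check how close a candidate solution comes to the hidden set.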

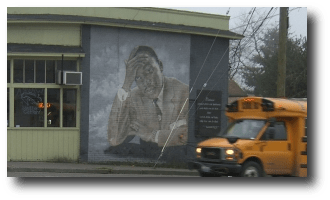

I pass by this mural of MLK almost every day, and I find it incredibly expressive. Maybe it has something to do with the Seattle weather. Today I thought he was looking tired, but hopeful.
